NSED Release: Steer Multi-Agent AI Swarms with Built-In Audit Trails and Frontier Reasoning

Use open-weight models on your own GPU, or combine them with proprietary models, to maximize reasoning quality while staying compliant!

Three 8–20B open-weight models on a $7K machine have matched frontier model reasoning on AIME 2025. Here's the orchestrator that makes it work.

Today we're publishing the core orchestration engine behind the benchmark results in our paper. The NSED repository is live at github.com/peeramid-labs/nsed. It is source-available under BSL 1.1 and free for organizations under $1M revenue, research, and education.

This post explains what NSED does, why it matters for teams that rely on AI for high-stakes reasoning, and how to run it today.

The Problem With Single-Model AI

Real workflows are not as simple as "chat and get an answer".

In real-world business applications, it matters a great deal that every person and every agent involved is on the same page.

Custom multi-agent setups help, but they're brittle. Today, AI champions across the industry are stuck juggling three different agents and cross-validating their outputs against different context planes to make sure everything is still on the same page.

The result is that the real data flow is a mess like the one I illustrate below:

If you are a power user of AI, you know this hassle: you have three agents open. One holds the strategy specs, the second does the job, and the third verifies, while you run around copy-pasting text between them. Standard automation can't handle this. There is no way to globally checkpoint the state; serial processing is slow, opaque, and highly inefficient with both tokens and time.

Before AI, teams could handle this alignment in syncs or chats. With AI, the volume of data has grown beyond what those can absorb, so a tool to steer it is needed.

Compliance in the AI Era

The concerns go far beyond just competitive advantages or user experience.

A single model gives you one perspective, one pass, and no way to verify the reasoning. You trust the output or you don't. There's no cross-check. No audit trail a regulator or client would accept.

And in ad hoc multi-model setups, there is no mechanism to prevent one model from dominating the consensus.

🇺🇸 Colorado AI Act

🇪🇺 EU AI Act

🇸🇬 Singapore MAS AIRM Guidelines

🇰🇷 South Korea AI Basic Act

These aren't suggestions. They carry real penalties, real audit requirements, and tight timelines. When regulators ask for your AI's audit trail, you can't just say, "Our vendor handled it."

To address all of these, we built the NSED protocol engine so that the compliance bus is baked directly into the software via NATS.io event streaming. Because every message between agents travels over that bus, a single design decision addresses both the compliance and the UX problems users face today.

To understand the deeper technical details of NSED, read our research release:

Today, we are releasing NSED to the public, so you can host it on your premises!

The current version is v0.3.1, which is mature enough for technical teams to start evaluating.

The release roadmap is not public yet, but here is a sneak peek: cryptographic attestation features are planned as early as 0.4.0!

As we move from our initial research codebase towards a full-featured agentic orchestration engine, we will keep adding functionality that businesses need today.

We are looking for feedback. If you are interested, share your needs here:

What NSED Does Differently

Real teams have syncs to converge. Agents have the NSED engine.

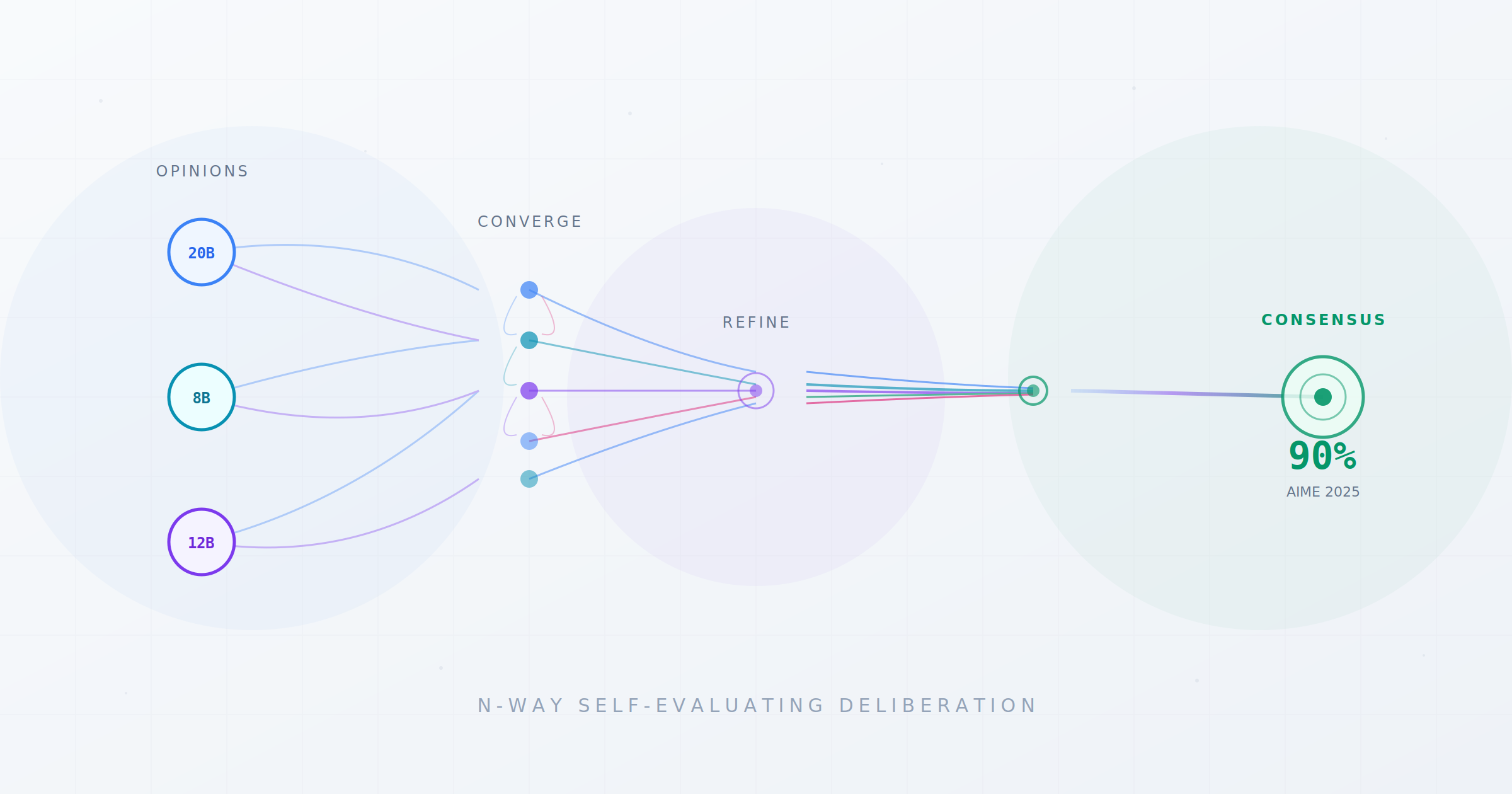

NSED (N-Way Self-Evaluating Deliberation) is a high-performance, lightweight Rust orchestrator that coordinates multiple AI agents through structured rounds streamed over the NATS.io bus. During this process, models converge on a solution.
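To make "structured rounds" more concrete, here is a toy propose-then-cross-evaluate loop in Python. It is a sketch of the general idea, not the NSED protocol or its API: the `Agent` class, the `deliberate` function, the rule that an agent never scores its own proposal, and the "highest peer score wins" rule are all assumptions made for illustration, and the agents are plain functions standing in for LLM calls.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Agent:
    name: str
    # (task, best_answer_so_far) -> new proposal; stands in for an LLM call
    propose: Callable[[str, Optional[str]], str]
    # (task, proposal) -> score in [0, 1]; stands in for an LLM evaluation
    score: Callable[[str, str], float]


def deliberate(task: str, agents: List[Agent],
               rounds: int = 3) -> Tuple[Optional[str], List[dict]]:
    """Run a fixed number of propose / cross-evaluate rounds (needs >= 2 agents)."""
    best: Optional[str] = None
    trace: List[dict] = []
    for round_no in range(1, rounds + 1):
        proposals = {a.name: a.propose(task, best) for a in agents}
        scores: Dict[str, float] = {}
        for author, text in proposals.items():
            # Assumption: an agent never scores its own proposal.
            peers = [a for a in agents if a.name != author]
            scores[author] = mean(p.score(task, text) for p in peers)
        winner = max(scores, key=scores.get)
        best = proposals[winner]
        # Every proposal and score is kept, so the run can be replayed later.
        trace.append({"round": round_no, "proposals": proposals,
                      "scores": scores, "winner": winner})
    return best, trace


def make_toy_agent(name: str, first_guess: str) -> Agent:
    """Hypothetical agent: adopts the current best answer once one exists."""
    def propose(task: str, best: Optional[str]) -> str:
        return best if best is not None else first_guess

    def score(task: str, proposal: str) -> float:
        return 1.0 if proposal == "4" else 0.0  # toy evaluator for "2 + 2"

    return Agent(name, propose, score)


agents = [make_toy_agent("a", "4"), make_toy_agent("b", "5"), make_toy_agent("c", "22")]
answer, trace = deliberate("What is 2 + 2?", agents, rounds=2)
print(answer, [r["winner"] for r in trace])
```

The `trace` list is the part worth noticing: because each round records who proposed what and how peers scored it, the record of how the answer was reached falls out of the loop itself.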

The outcome of this architecture is a macro-level neural circuit: the Mixture-of-Experts idea behind today's most powerful models, scaled up from experts inside one model to interactions between whole models.

NSED turns chaotic back-and-forth communication between agents into a streamlined, predictable, and transparent process. The result is not only a cleaner architecture but also 15–30% better reasoning, or 4x lower CapEx than incumbents.

What this produces:

- Measurably better reasoning. Three open-weight models (20B, 8B, 12B) running on consumer hardware score 84% on AIME 2025 through NSED deliberation. The same models score 54% with naive majority voting. NSED High-Perf reaches 90.0% using larger open-weight models that you can fully self-host — matching the best proprietary frontier models available today.

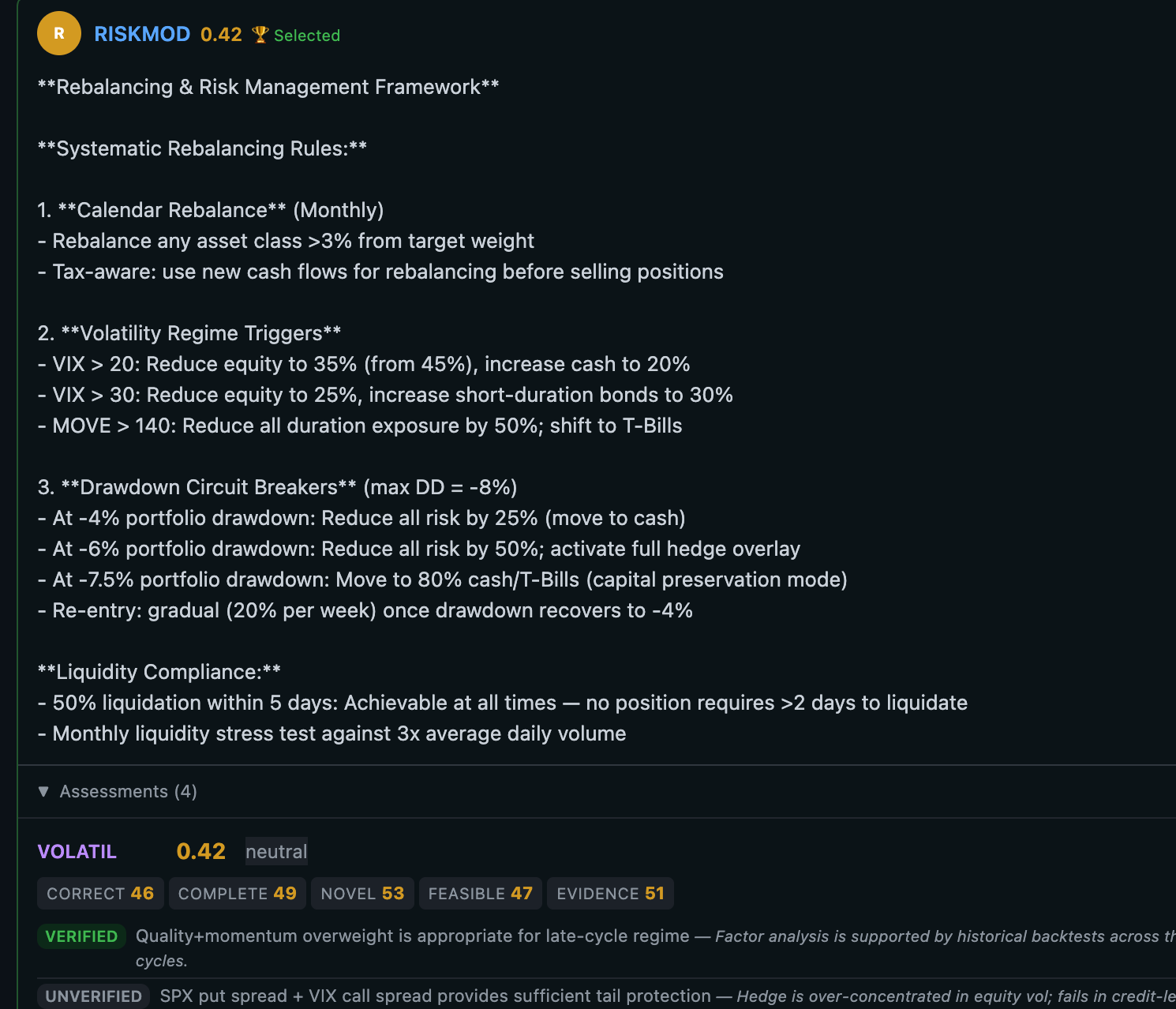

- A complete audit trail. Every proposal, every evaluation, every score, every reasoning trace is persisted and streamable in real-time via SSE. When a client or regulator asks "how did the AI reach this conclusion," you can show them the full deliberation record — round by round, agent by agent.

- Human oversight at every step. Inject new information mid-deliberation. Expose your internal databases and APIs as tools agents can call. Set time, token, and cost budgets. Your domain experts stay in control.

- Kill Switch. NSED does not show you a single chain of thought, as thinking models do. You witness the evolution of the solution. Your safety team can steer it over time, run a safety whistleblower agent, or pull the kill switch on the entire job!

- JIT & Service-Level Brokerage. Out of the box, NSED is designed so that picking the right models for a challenge is a parametric function of time, cost, and other service-level parameters such as quality and privacy constraints. Anonymized agent performance telemetry is fed back to the broker service and can be used to update model and agent ratings in real time. Whatever happens next, the framework aims to get you the best possible answer just in time.
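The brokerage idea in the last bullet, model selection as a function of time, cost, quality, and privacy constraints, can be sketched in a few lines of Python. This is a simplified illustration under our own assumptions, not the NSED broker: `ModelOffer`, `select_models`, the greedy "best quality first" rule, and every model name and number below are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOffer:
    name: str             # hypothetical model identifier
    quality: float        # rating in [0, 1], e.g. derived from past telemetry
    cost_per_task: float  # USD
    latency_s: float      # typical time to answer
    self_hosted: bool     # True if data never leaves your network


def select_models(offers: List[ModelOffer], *, max_cost: float,
                  max_latency_s: float, require_self_hosted: bool,
                  k: int = 3) -> List[ModelOffer]:
    """Greedy pick: filter by hard constraints, then take the best-rated
    models that still fit into the remaining cost budget."""
    eligible = [o for o in offers
                if o.latency_s <= max_latency_s
                and (o.self_hosted or not require_self_hosted)]
    eligible.sort(key=lambda o: o.quality, reverse=True)
    chosen: List[ModelOffer] = []
    spent = 0.0
    for offer in eligible:
        if len(chosen) < k and spent + offer.cost_per_task <= max_cost:
            chosen.append(offer)
            spent += offer.cost_per_task
    return chosen


offers = [
    ModelOffer("local-20b", quality=0.70, cost_per_task=0.0, latency_s=40, self_hosted=True),
    ModelOffer("local-8b", quality=0.55, cost_per_task=0.0, latency_s=15, self_hosted=True),
    ModelOffer("hosted-large", quality=0.90, cost_per_task=0.5, latency_s=20, self_hosted=False),
]

private = select_models(offers, max_cost=1.0, max_latency_s=60, require_self_hosted=True)
mixed = select_models(offers, max_cost=1.0, max_latency_s=60, require_self_hosted=False)
print([o.name for o in private], [o.name for o in mixed])
```

Updating `quality` from fresh telemetry and calling `select_models` again is all it takes, in this toy version, for the selection to follow ratings over time.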

What Running NSED Gives You

If you're already running local models — through Ollama, vLLM, or any OpenAI-compatible endpoint — NSED isn't a replacement. It's an amplifier. The deliberation protocol wraps around whatever models you have and makes them substantially better.

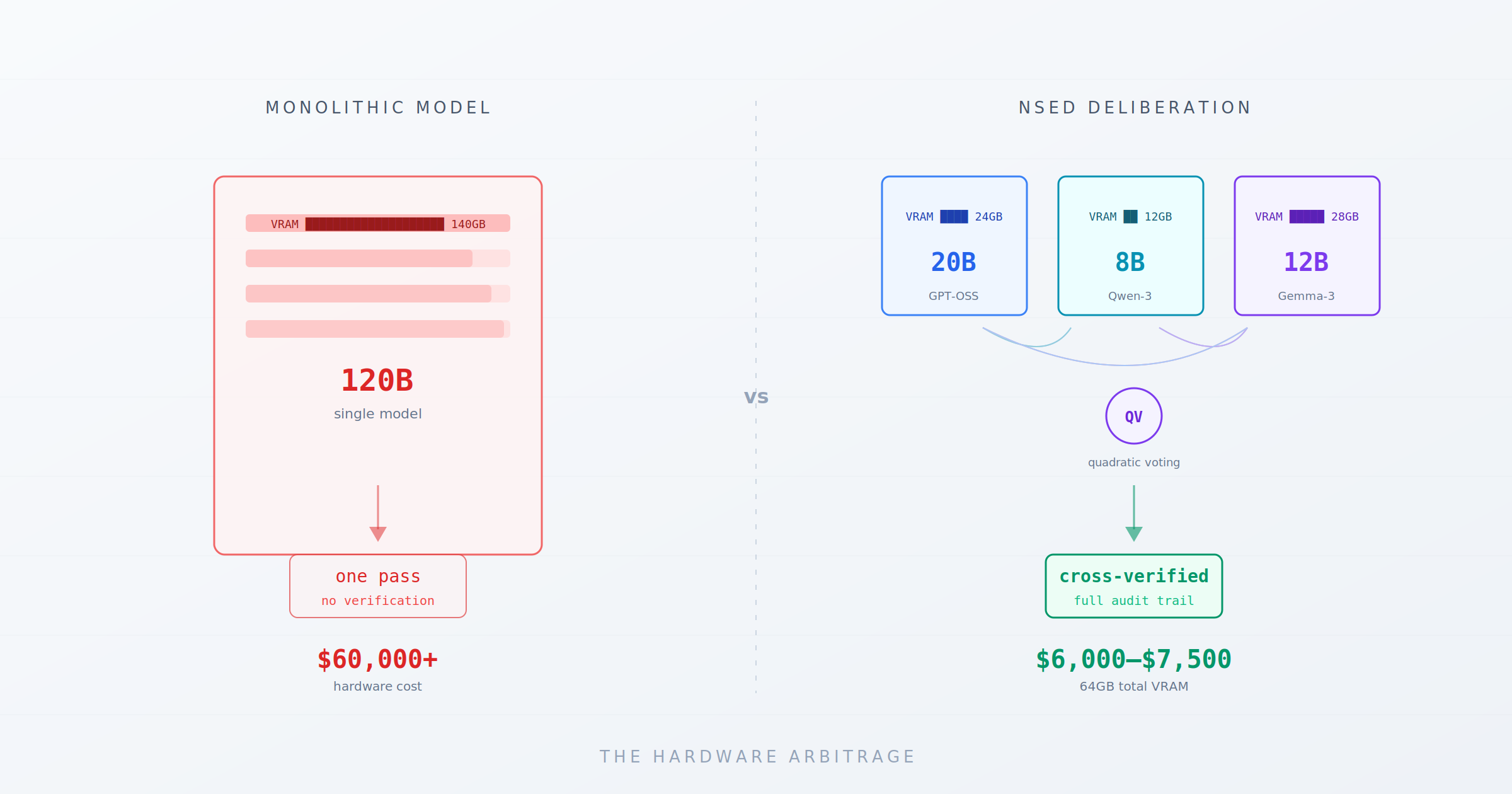

1/ Lowers CapEx

While most of the industry today focuses on datacenter-scale approaches such as NVLink inter-GPU bandwidth and Mixture-of-Experts, our proposed Mixture-of-Models architecture simplifies requirements by compressing outputs over semantic space. This cuts bandwidth requirements by an order of magnitude, and it reduces the overall VRAM needed by a factor of more than 3 by using a swarm of smaller, heterogeneous models.

2/ Better reasoning

The numbers from our benchmarks: 15–30%+ improvement in reasoning performance over the same models run individually or through naive majority voting. That's not from bigger models or more parameters — it's from structured cross-evaluation across multiple rounds. Your existing 8B and 20B models, the ones already running on your hardware, produce frontier-class output when they deliberate instead of answering in isolation.
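For readers unfamiliar with the baseline, "naive majority voting" means each model answers once, in isolation, and the most common answer wins. A minimal Python sketch follows; the function name and the sample answers are made up for illustration and are not taken from our benchmark harness.

```python
from collections import Counter
from typing import List


def majority_vote(answers: List[str]) -> str:
    """Return the most frequent answer; ties go to the answer seen first."""
    return Counter(answers).most_common(1)[0][0]


# Made-up example: two models share the same mistake, one is right.
isolated_answers = ["70", "70", "73"]
print(majority_vote(isolated_answers))
```

Voting can only count finished answers. If two of three models make the same mistake, the mistake wins, and no model ever sees why another model disagreed. Cross-evaluation over several rounds is meant to address exactly that gap.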

3/ Compliance

Internally, we use something that enterprise technical teams already know well: NATS JetStream with persistence. This isn't logging bolted on after the fact. It's the communication bus itself. The SSE event stream gives your compliance team real-time visibility into what the AI is doing and why.

The EU AI Act's Article 12 requires tamper-resistant logging for high-risk AI systems, and FINRA's 2026 guidance demands prompt/output logging with version tracking. NSED produces these artifacts as a byproduct of normal operation.
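To show what a compliance tool consuming such a stream has to deal with, here is a small Python sketch that serializes one deliberation event into the standard Server-Sent Events wire format (`id:`, `event:`, and `data:` fields, ended by a blank line) and parses it back. Only the SSE framing is standard. The event type `score` and every payload field are assumptions for illustration, not the actual NSED event schema.

```python
import json
from typing import Any, Dict


def to_sse(event_id: int, event_type: str, payload: Dict[str, Any]) -> str:
    """Serialize one event as a single SSE frame.

    json.dumps emits no raw newlines by default, so one data line is enough.
    """
    data = json.dumps(payload, sort_keys=True)
    return f"id: {event_id}\nevent: {event_type}\ndata: {data}\n\n"


def parse_sse(frame: str) -> Dict[str, Any]:
    """Parse a frame produced by to_sse (single-line data only)."""
    fields: Dict[str, Any] = {}
    for line in frame.strip().split("\n"):
        key, _, value = line.partition(": ")
        fields[key] = value
    fields["data"] = json.loads(fields["data"])
    return fields


# Hypothetical event: agent-a scored agent-b's proposal in round 1.
frame = to_sse(7, "score", {"round": 1, "agent": "agent-a",
                            "target": "agent-b", "value": 0.8})
print(frame, end="")
print(parse_sse(frame)["data"]["value"])
```

Because every frame carries an `id`, a consumer that disconnects can resume from the last event it saw, which matters when the stream doubles as an audit record.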

Human-in-the-loop UX that actually works at runtime. Not a pre-deployment review. Not a post-hoc audit. Your domain experts inject context, redirect focus, and expose internal tools while the deliberation is running.

This mechanism puts humans where they should be: in the cockpit, driving the AI powerhouse behind your business. The dashboard shows solution quality improving round by round in real time.

You can literally watch a document being assembled from chunks as it happens. An attorney reviewing a contract analysis can push new constraints into the process without triggering a restart. A portfolio manager can inject breaking market context mid-deliberation. A security auditor can steer the analysis toward a suspected vulnerability in real time. Every injection is logged, attributed, and part of the audit trail.
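The "inject without restart, and log who injected what" pattern can be sketched as follows. This is an illustration under assumed names (`Session`, `inject`, `prompt_for_next_round`, and the log field names are ours), not the NSED implementation: a note is added to the context that the next round will see, and the same call appends an attributed, timestamped audit entry.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class Session:
    task: str
    notes: List[str] = field(default_factory=list)
    audit_log: List[Dict[str, str]] = field(default_factory=list)

    def inject(self, author: str, note: str) -> None:
        """Add operator context for upcoming rounds and record who added it."""
        self.notes.append(note)
        self.audit_log.append({
            "kind": "human_injection",
            "author": author,
            "note": note,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def prompt_for_next_round(self) -> str:
        """Build the prompt agents see next; earlier rounds are not rerun."""
        if not self.notes:
            return self.task
        bullet_list = "\n".join(f"- {n}" for n in self.notes)
        return f"{self.task}\n\nOperator notes:\n{bullet_list}"


session = Session(task="Review the indemnification clause.")
before = session.prompt_for_next_round()
session.inject("attorney@example.com", "Liability must be capped at fees paid.")
after = session.prompt_for_next_round()
print(after)
print(session.audit_log[0]["author"])
```

Writing the audit entry inside `inject` rather than in a separate logging step is the design point: there is no code path that changes the deliberation without leaving a record.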

4/ Diversity

Provider-agnostic Mixture of Models. Mix OpenAI, Anthropic, Ollama, vLLM, or any OpenAI-compatible endpoint in the same deliberation session. Different models from different providers cross-evaluate each other — errors one model's training distribution misses, another catches. This is vendor diversification that's architecturally enforced, not a procurement policy someone has to remember to follow.
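What makes mixing providers practical is that many local servers accept the same OpenAI-style chat completions request. The Python sketch below builds (but does not send) identical requests for two local endpoints. It says nothing about how NSED is configured: `ENDPOINTS`, `chat_request`, and the model name are placeholders, and the base URLs are the usual out-of-the-box defaults for Ollama and vLLM, which you should adjust to your own setup.

```python
import json
from urllib.request import Request

# Assumed defaults; change host and port to match your deployment.
ENDPOINTS = {
    "ollama": "http://localhost:11434/v1",
    "vllm": "http://localhost:8000/v1",
}


def chat_request(base_url: str, model: str, prompt: str,
                 api_key: str = "unused-for-local-servers") -> Request:
    """Build an OpenAI-compatible chat completions request (not sent here)."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


# "placeholder-model" is hypothetical; use a model your server actually hosts.
prepared = {name: chat_request(url, "placeholder-model", "Is 91 prime?")
            for name, url in ENDPOINTS.items()}
for name, req in prepared.items():
    print(name, req.get_method(), req.full_url)
```

Sending a request is one call to `urllib.request.urlopen(req)` once a server is running. The same prompt goes to every provider, so their answers can be compared directly.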

5/ Time & Cost control

SLA-driven cost control. Set time budgets, token budgets, and cost ceilings per deliberation. The orchestrator manages resource allocation across agents and rounds — you define the constraint, it optimizes within it. No surprise API bills.
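A budget that is checked before each round, rather than after the bill arrives, can be sketched like this. The `Budget` class, its method names, and the per-round estimates are assumptions for illustration only. NSED's own budget management is more involved than a three-way comparison.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Budget:
    max_seconds: float
    max_tokens: int
    max_cost_usd: float
    tokens_used: int = 0
    cost_usd: float = 0.0
    started_at: float = field(default_factory=time.monotonic)

    def can_afford(self, tokens: int, cost_usd: float) -> bool:
        """True if one more round of the estimated size fits every ceiling."""
        within_time = time.monotonic() - self.started_at < self.max_seconds
        return (within_time
                and self.tokens_used + tokens <= self.max_tokens
                and self.cost_usd + cost_usd <= self.max_cost_usd)

    def charge(self, tokens: int, cost_usd: float) -> None:
        """Record what a finished round actually consumed."""
        self.tokens_used += tokens
        self.cost_usd += cost_usd


budget = Budget(max_seconds=60.0, max_tokens=1000, max_cost_usd=1.0)
rounds_run = 0
# Hypothetical estimate: each round costs about 400 tokens and $0.10.
while budget.can_afford(tokens=400, cost_usd=0.10):
    budget.charge(tokens=400, cost_usd=0.10)  # a real round would run here
    rounds_run += 1
print(rounds_run, budget.tokens_used)
```

Checking before spending means the loop stops at 800 tokens instead of overshooting the 1,000-token ceiling with a third round.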

Just-In-Time solution delivery. A human in the loop may take time, and we account for that. Some models may be slower, or even go offline. NSED is highly redundant: it can recover and finish the work even when parts of the reasoning cluster are slow or unresponsive, so the report you need by Monday morning will be there.
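One common way to keep a round moving when an agent is slow or offline is to wait until a deadline and continue with whoever answered, provided a quorum was reached. The Python sketch below shows that pattern with `asyncio`. It is our illustration, not NSED's recovery logic: `fake_agent`, `collect`, the deadline, and the quorum value are all hypothetical.

```python
import asyncio
from typing import Awaitable, Dict, List, Tuple


async def fake_agent(name: str, delay_s: float, answer: str) -> Tuple[str, str]:
    """Stand-in for a model call that takes delay_s seconds."""
    await asyncio.sleep(delay_s)
    return name, answer


async def collect(calls: List[Awaitable[Tuple[str, str]]],
                  deadline_s: float, quorum: int) -> Dict[str, str]:
    """Wait up to deadline_s, cancel stragglers, require at least quorum answers."""
    tasks = [asyncio.ensure_future(c) for c in calls]
    done, pending = await asyncio.wait(tasks, timeout=deadline_s)
    for task in pending:
        task.cancel()
    if pending:
        # Let cancelled tasks finish unwinding before we move on.
        await asyncio.gather(*pending, return_exceptions=True)
    results = dict(t.result() for t in done if t.exception() is None)
    if len(results) < quorum:
        raise TimeoutError(f"only {len(results)} of {len(tasks)} agents answered in time")
    return results


async def main() -> Dict[str, str]:
    calls = [
        fake_agent("agent-a", 0.01, "42"),
        fake_agent("agent-b", 0.02, "42"),
        fake_agent("agent-c", 5.0, "41"),  # too slow for this round
    ]
    return await collect(calls, deadline_s=0.5, quorum=2)


results = asyncio.run(main())
print(sorted(results))
```

The slow agent is dropped from this round only. A fuller version would let it rejoin in the next round once it responds again.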

This is the stack that enterprise AI teams would need to build from scratch to satisfy the compliance requirements rolling out across EU, APAC, and US financial regulation in 2026. It exists today, in a single Rust binary, ready to run on your infrastructure.

Who This Is For

NSED is built for technical teams where AI reasoning quality has immediate economic or legal consequences.

Leaders: NSED gives leadership visibility into AI-assisted workflows, with built-in oversight, audit trails, cost controls, and a measured performance increase, without requiring your team to build compliance infrastructure from scratch.

Developers & Researchers: Use it to get state-of-the-art reasoning quality.

Quants & risk teams: Your proprietary data, your strategies, your models — nothing leaves your network.

Supply chain: Automate cross-entity settlements by running NSED across your partner board.

Legal, Financial, Compliance & risk officers: Deadlines from MAS AIRM Guidelines, EU AI Act high-risk provisions, and FINRA's 2026 AI guidance are coming. NSED infrastructure produces audit trails, human oversight documentation, and vendor diversification by architecture — not by policy. Compliance artifacts are a byproduct of normal operation.

What's in the initial release

The nsed repo contains:

- NSED Orchestrator — The core deliberation engine. SLA-driven budget management, NATS JetStream for high-performance messaging, SSE event streaming, and a built-in minimal dashboard.

- NSED Agent - A reference implementation of the NSED agent that we use ourselves and share publicly. It showcases a practical implementation of the protocol.

- NSED Agent SDK - An Agent Development Kit developers can use to build their own agents.

- Benchmark Suite — The evaluation harness used for our published AIME 2025 and LiveCodeBench results. Open for reproducibility and independent verification.

- Simulation Mode — Run the full deliberation loop with simulated LLM responses, no API keys needed. Useful for evaluating the architecture before connecting real models.

Install via Homebrew, APT, Docker, or build from source.

Deal & Licensing

If you're running a security audit firm, quant desk, legal practice, or compliance team and want to evaluate NSED against your own workloads, reach out at contact@peeramid.xyz. We'll set up a benchmark run on your data.

The orchestrator is source-available under BSL 1.1 — free for organizations under $1M revenue, research, and education. Above the threshold, commercial licenses include a patent grant and SLA support.

NSED is part of a patent-pending Swarm Intelligence technology built by Peeramid Labs. The deliberation protocol is described in our peer-reviewed paper (arXiv 2601.16863).